Machine Learning AI Plays Video Game

Plus: Revisiting Computational Complexity and some UE Learning

Before I go into more lengthy subjects, I thought to give some hints on learning Unreal Engine.

Unreal Engine Pathways

Last December I put up a site where I collect links of interesting Unreal Engine tutorials and other learning material. I decided to put this up, since I have heard so many times this question “how can I learn Unreal?”. There are lots of free material available, but it is not always obvious where to start. This Unreal Pathways www-site tries to help in this matter.

This is a collection of curated links, with some explanations and additional hints on how to approach this subject. The site itself does not contain tutorials, it is basically just a portal.

AI plays ROT-8 Ballgame with Reinforcement Learning and Generative Adversarial Networks in a Competitive Environment

The goal of this project is to experiment how Artificial Intelligence (AI) can learn to play ROT-8 Ballgame using the same inputs as a human player, and perceiving the game like a human player. This also tests how AI can manage teamwork with other individual AI players and compete against other AI teams.

ROT-8 Ballgame is a game mode in ROT-8 Rover game we are developing simultaneously with Planetrism game. As a developer of the game, I have full access to the game source, and because the game is developed on Unreal Engine, I have full access to engine source code as well. Therefore I could fork the project, and develop a separate version of the game - suitable for machine learning experiments - with suitable APIs that AI can use to get a video feed from the game and to give control input in the same way a human player would do.

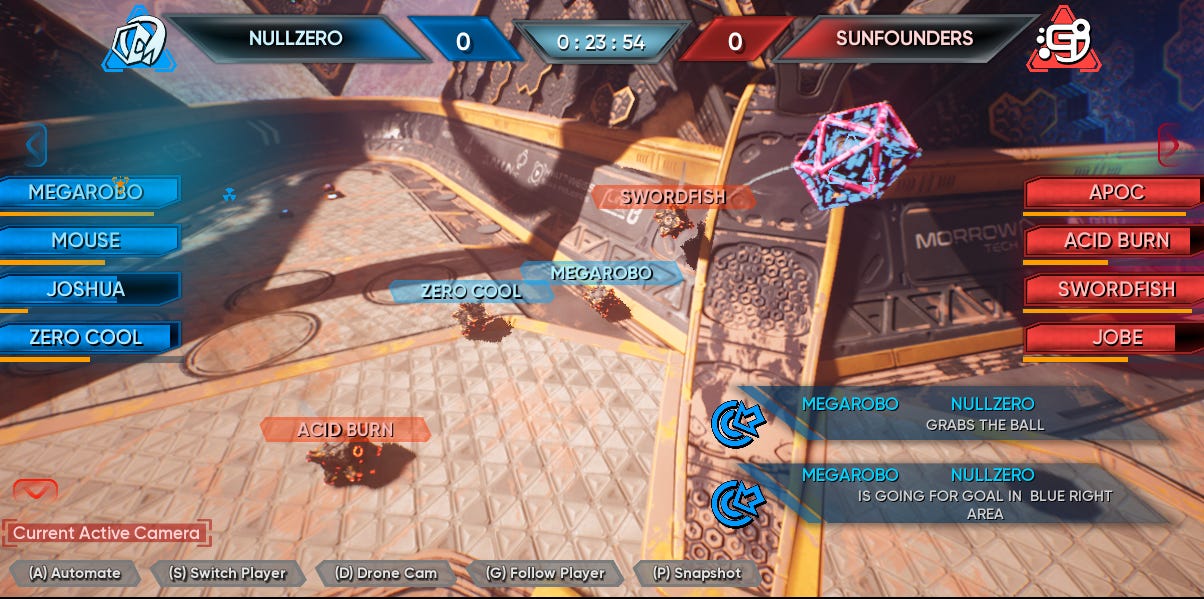

The game itself is a 3D ballgame, where players guide cube-shaped robots, that move by rotating and are capable of grabbing and tossing objects. Robots have also limited jump and hover capabilities. The player controls the robot through third-person view, and get various information on the user interface. Game is played in two teams, and players try to toss the ball through a ring-shaped goal and score points. You can see an excerpt from play (in spectator view) as a video

AI Gameplay in YouTube

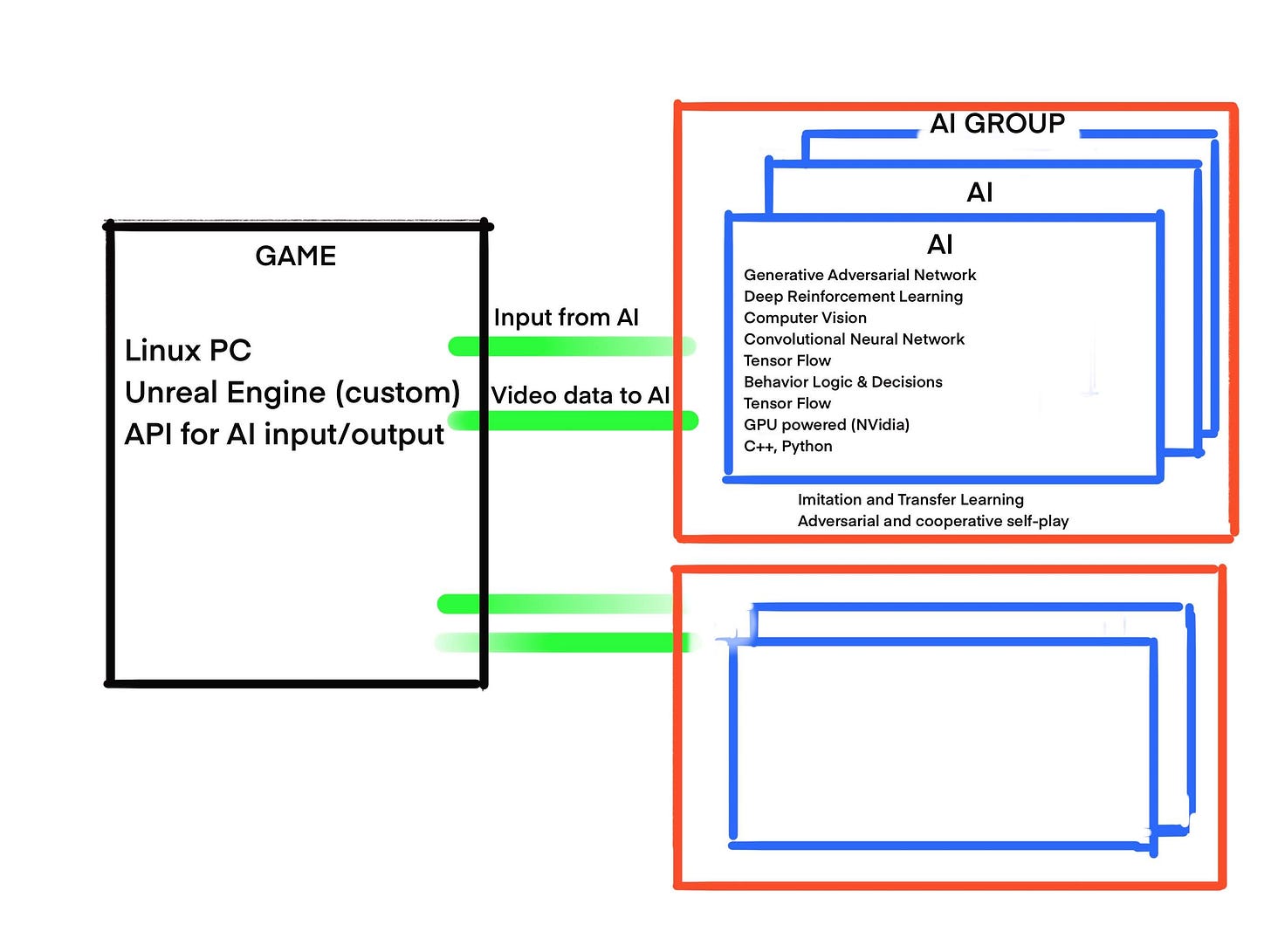

The test setup is as follows. The game itself is run on a Linux PC, with Unreal Engine modified and compiled from source code. Each AI is a separate process on different Linux PCs, and they use NVidia GPUs to boost their machine learning process. AI itself is a combination of C++ and Python based custom built software which connects to the game.

Technically, each AI uses Generative Adversarial Networks (GAN) and Deep Reinforcement Learning (DRL), but this group of AI also competes with each other and subgroups work as a team, too. Each AI may share anything it has learned with others, and they are capable of imitating others and learn from that.

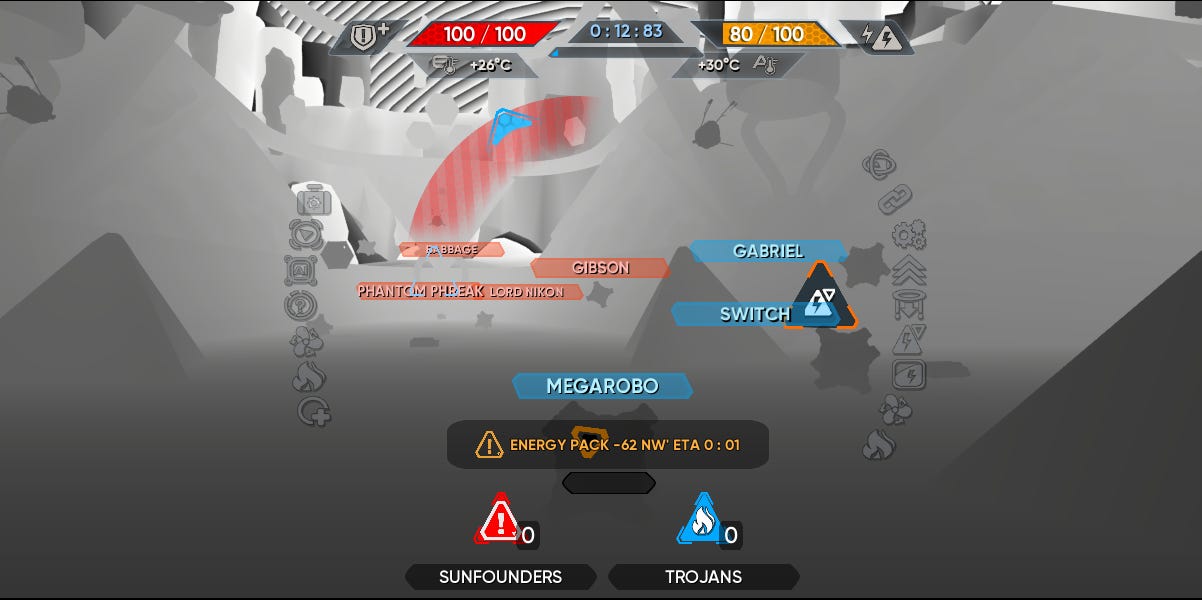

For true learning purposes, each AI has a spatial understanding of playfield in memory, quite the same way a human player would understand it. In this case, the normal vision is assisted with a depth map and normal map of the viewport, to better understand the shapes within the environment through convolutional neural networks

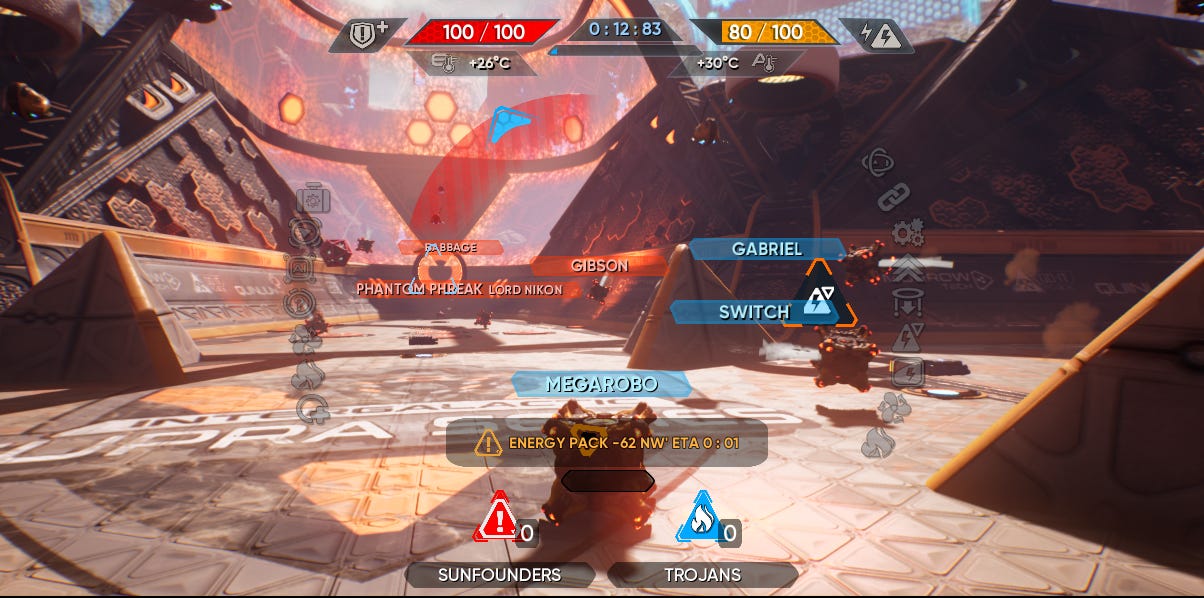

Normal player view in the game

The basic layout of playfield does not change, although certain details may change during play, e.g. which goals are open to score, and some changing obstacles. But otherwise the 3D geometry is the same from one game to another, thus AI can eventually develop tactics and be able to predict where team members and opponents move. Like human players, also AI players may communicate with each other.

Each AI brain uses visual input which is interpreted as a 3D scene through image and shape recognition, and subsequently processed into a more compact form by filtering all redundant and unimportant information in the context of all previously learned data about playfield and its elements. The continuous stream of this data is then used to make decisions based on already learned behavior patterns and new experiments with GAN method.

Depth view of game environment

Normal map view of game environment

AI makes several decisions all the time and sends it as a player input to the game through API which simulates normal human player input. The outcome of each decision is eventually evaluated based on whether it improved the game situation or not. Some of this evaluation is done during the game, and most of it is done after the game when AI evaluates the match in its entirety.

This time I explained the setup and an overall view of the project, but since this is a rather lengthy subject, and I will spare the more intricate details for subsequent newsletters. If you are interested, you may send questions, and I will probably answer them in the next newsletter. Please keep in mind, that there are some details that I cannot reveal - at least not yet -, unfortunately.

Complexity of algorithms, part 2

In the previous newsletter I mentioned the Big O notation, which describes the limiting behavior of a function when the argument n (the input size) grows towards infinity. It is important to understand this theory, as it tells how the scale of the work done in the algorithm grows in the worst case while the input size grows. But it is also important to understand that this is mostly a theoretical measure of a theoretical case.

Theory

Big O notation defines which order bounds the growth of the algorithm as a function of n. For example, let us assume that we have an algorithm that does work according to function

f(n) = 4000 n + 3 log n + 4 n2

Although with very small input size the first term is much larger than other, eventually the last one will outgrow all the others. Thus we say that the order of this function (in Big O notation) is O(n2).

… And Real Development

In real life, there are other factors we have to consider. We do not have infinite input size, but we are also limited by the practical limitations like

- how many operations the CPU can handle in one time unit

- How fast memory access we have (RAM, I/O speed, disk access, etc.), and especially

- What is the time frame we are using

This means that in real life we have to consider every term in the function I did show above, not just the theoretical bounding order.

Time frame is especially important and depends on what is the purpose of the software. For example, when I do data analysis, there is usually no problem if a complex algorithm takes several minutes or even several hours. Everyone knows that analyzing gigabytes or terabytes data will take time. But the games are different. Game is usually run in a cycle of frames, where the status and location of every object is updated every frame and presented visually on the screen. Many modern video games have a very high frame rate, usually more than 60 frames per second (FPS).

If we assume that our target is 60 FPS, it means that we have to successfully run all algorithms 60 times every second. If we cannot, then the FPS will decrease. Based on the speed of CPU and general memory access we will know that theoretically we have a certain amount of operations (P) to use for the game, and not a single operation more. You have to be able to squeeze every tiny sequential operation in that amount. On the other hand, if our target is 120 FPS, we have only P/2 operations to use (theoretically), and if we are satisfied with 30 FPS, we have 2*P operations to use.

Graphics

In modern video games, the graphics are very demanding, and it takes lots of calculations to achieve that realistic 3D presentation of the game world. Although we usually have a dedicated GPU which can handle these calculations, we still have to be very careful when programming the shaders that determine how things look on the screen. If you want more realistic looks and more effects, more complex our shaders will be. Then you have to understand that any shader may be used for a multitude of objects on the screen, and thus any additional operation in the shader is multiplied tens, hundreds or thousand times. Again, all these calculations have to be done within one frame.

This division between CPU and GPU also complicates things, because either one can become a bottleneck. Game is controlled by CPU, but you can choke GPU with too heavy shaders and too complex models, which affects CPU too. And different machines and platforms can be very different. This means that certain things you can do on a powerful gaming PC are impossible on a mobile platform.

Everything is an Algorithm

But in any case, it is important to consider

- how you build the algorithms (game mechanics),

- how you present the data (data structures),

- How complex models you have (number of polygons and vertexes, their shape),

- How complex shaders you have (materials, visual outlook, transparency),

- How many objects (including the environment) you have in the game and

- General settings (object culling, lighting, shadows, etc.)

All these factors affect the underlying algorithms in the game engine itself, video card drivers, etc. In the end, everything done within the computer is one algorithm or another and consumes CPU operations.

Planetrism Data Structures Revisited

In the previous newsletter I explained how I used a hierarchical data structure to organize resource locations in Planetrism, and how it decreased the computational complexity to find the locations nearby. Instead of putting millions of locations in one array, they are sorted into a layered structure of control objects that cover an individual area in the map grid. Each of those objects controls an array of smaller regions etc, until there is a control object for a sufficiently small area, having a bunch of resource objects in an array. You can visualize it as layers of a map grid, where the grid is divided into smaller areas as we descend the data structure.

But in reality, this is not enough. All those resource objects are a bit different. They are of different types, their status varies during the game, some are active, some are used up, etc. Finding the nearest resource object was easy, but what if we want to find out the nearest resource object of a certain type? Suddenly we have to do lots of extra work, because we may have to check them repeatedly. In the worst case, this resource object could be the farthest object on the map. In such a case, we should go through each smaller area, object by object, and we would understand that our hierarchical data structure doesn't help us at all. Unless we make certain changes in it.

We already saw before, that by making our data structure more complex than just a simple array we made searches faster. Now we have to make our data structure even more complex to make more detailed searches faster, too. What we need is more data in each control node. Basically, each control node should have information about what types of resource object it or its descending control objects contain.

If we have a resource object of energy type, then we will add this resource type into an array within its control object. If there are other objects of similar type within the area of this same control object, it is listed only once in the array, of course. All these existing types are replicated in the upper hierarchy. Thus, when we want to find out the nearest resource object of a certain type, the upper hierarchy knows if there exists any resource object of that type in any of its descendants. This way we can always skip those parts of the grid where no such types exist, and always choose the closest region, then the closest sub-region, etc. When we have this kind of indexing in place, our search is very fast again.

So sometimes it pays to make things a bit more complex because that complexity will decrease the amount of work significantly.